An In-Depth Guide to Contrastive Learning in AI

Contrastive learning is a machine learning technique that teaches models to distinguish between similar and dissimilar samples.

Picsellia Team

·7 min read

Ready to build computer vision?

Go from raw images to production models. Free trial, no credit card, cancel anytime.

An In-Depth Guide to Contrastive Learning in AI

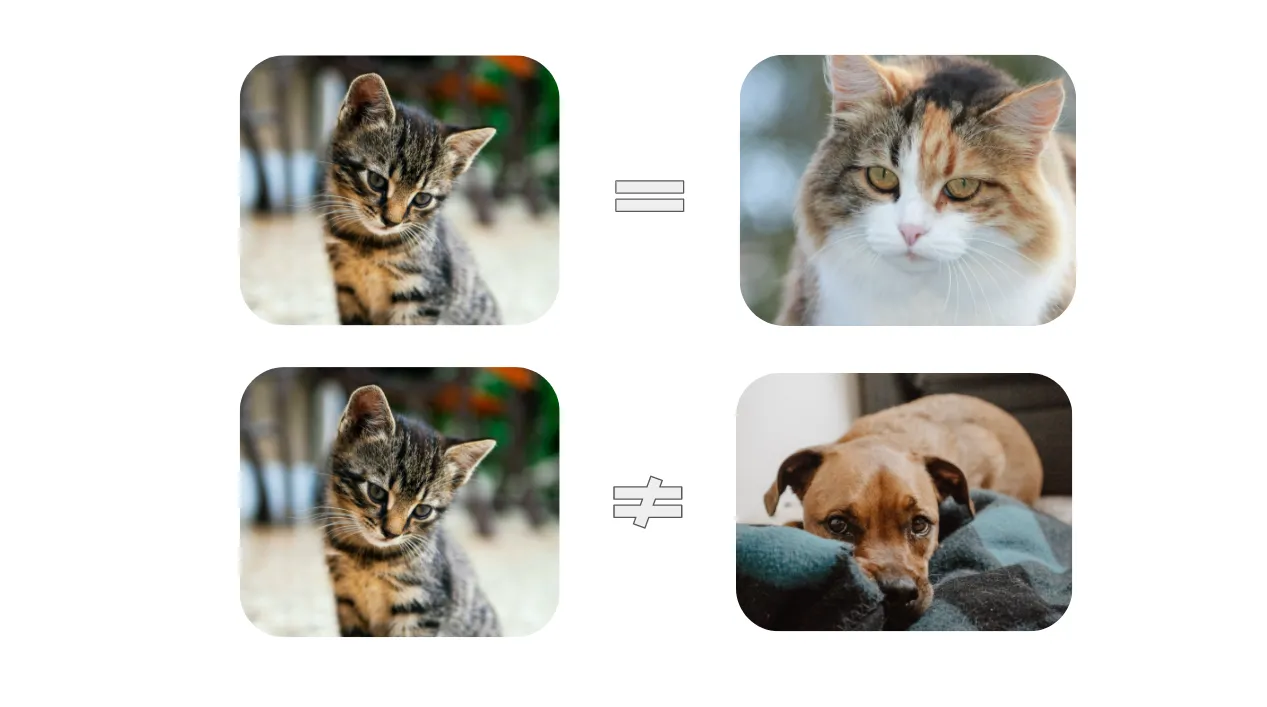

When a child is learning to recognize animals, he may struggle in the beginning, to differentiate between various animals. However, by comparing similar and dissimilar animals, they gradually learn to identify common features within a species across species.

It perfectly illustrates the concept behind contrastive learning, which aims to learn the general features of a dataset by teaching the model which data points are similar or different.

An in depth guide to contrastive learning in ai

Illustration of contrastive learning’s concept

https://towardsdatascience.com/understanding-contrastive-learning-d5b19fd96607

An in depth guide to contrastive learning in ai

Illustration of contrastive learning’s concept

https://towardsdatascience.com/understanding-contrastive-learning-d5b19fd96607

Introduction to Contrastive Learning

Contrastive learning is a machine learning technique that teaches models to distinguish between similar and dissimilar samples. By contrasting different samples, models can learn to identify common attributes and distinguish between classes.

In supervised learning, models are trained on labeled data, but contrastive learning doesn’t need labeled data, as it leverages the similarities and differences between data points to learn representations. This makes it highly scalable and useful for pre-training models in scenarios where labeled data is limited or unavailable.

This enables models to learn high-level features about the data before performing specific computer vision tasks such as image classification, object detection, and image segmentation. To learn more about these tasks, read this article: Segmentation vs Detection vs Classification in Computer Vision: A Comparative Analysis

Terminology in Contrastive Learning

To understand contrastive learning, it is important to be familiar with some key terminology. Contrastive learning aims to learn **low-dimensional representations of data **by maximizing the similarity between **similar samples **and minimizing it between dissimilar samples. This is typically done by using the Euclidean distance as a measure of dissimilarity in the representation space.

An in depth guide to contrastive learning in ai

An in depth guide to contrastive learning in ai

The goal is to bring similar samples closer together in the representation space, making them easier to distinguish, while pushing dissimilar samples farther apart. By learning such representations, models can effectively capture the underlying structure of the data and generalize well to unseen samples.

Contrastive Learning in Practice

In practice, contrastive learning involves the selection of:

- an anchor sample

- one positive sample

- several negative samples.

The anchor sample serves as a reference point. The goal is to bring the positive sample closer to the anchor while pushing the negative samples farther away. In computer vision, this is typically achieved by learning an encoder, which maps images to embeddings in a low-dimensional space.

An in depth guide to contrastive learning in ai

Illustration of how contrastive learning works in practice

(Image source: Schroff et al. 2015)

An in depth guide to contrastive learning in ai

Illustration of how contrastive learning works in practice

(Image source: Schroff et al. 2015)

The positive sample can be an image from the same class as the anchor or an augmented version of the anchor image. In case this is the chosen methodology, Picsellia provides ready to use, customizable, data augmentation processings that simplify this anchor-positive data preparation process.

The negative samples are typically randomly selected from a different class or a set of unrelated images. By optimizing the model to minimize the distance between positive pairs and maximize the distance between negative pairs, contrastive learning allows the model to learn discriminative representations.

Self-Supervised Contrastive Learning

The ability to learn from unlabeled data, called self-supervised learning, is one of the key advantages of contrastive learning.

Contrastive learning is a type of self-supervised learning, which is itself a type of unsupervised learning, which relies on how the data is defined, without relying on any explicit labeling. This is different from supervised learning, where the model is trained based on a known set of input-output pairs. On the other hand, unsupervised learning involves training the model using no labels at all.

In self-supervised contrastive learning, the positive sample is generated by augmenting the anchor image, while the negative samples are randomly selected from the training minibatch. This makes it highly scalable and useful for pre-training, allowing models to learn from large amounts of unlabeled data

However, self-supervised contrastive learning presents challenges in terms of false negatives and degradation in representation quality. False negatives refer to negatives that are generated from samples of the same class as the anchor, leading to a degradation in the quality of the learned representations. Addressing these challenges and improving the quality of negatives is an active area of research in contrastive learning.

Supervised Contrastive Learning

While self-supervised contrastive learning is effective in learning from unlabelled data, the application of contrastive learning in the fully supervised setting is relatively unexplored. In supervised contrastive learning, labeled data generates positive samples from existing same-class examples, providing more variability in pretraining than simple augmentations of the anchor.

A recent approach called "Supervised Contrastive Learning" bridges the gap between self-supervised and fully supervised learning. It introduces a novel loss function, called SupCon, that encourages normalized embeddings from the same class to be pulled closer together, while embeddings from different classes are pushed apart. This approach enables contrastive learning to be applied in the supervised setting, improving representation learning for tasks such as image classification.

Challenges and Considerations in Contrastive Learning

Implementing contrastive learning comes with several challenges and considerations such as:

- The batch size: a larger batch size allows for more diverse and difficult negative samples, which are crucial for learning good representations.

- The quality of negative samples: the model should be trained on hard negatives that improve representation quality without including false negatives.

- The methods chosen for generating positive samples: determining the most optimal one is an ongoing area of research in contrastive learning.

- The choice of augmentations used in self-supervised learning plays a crucial role in the quality of learned representations.

- Tuning hyperparameters, such as learning rate, temperature, and encoder architecture is necessary to improve representation quality and achieve better performance in contrastive learning.

Real-World Applications of Contrastive Learning

Contrastive learning has a wide range of applications in computer vision tasks:

- Image classification: models learn discriminative features that can accurately classify images into different classes.

- Object detection: improving the representation of objects.

- **Image segmentation: **learning more robust and accurate segment representations.

Contrastive learning has also been applied to **video analysis tasks **such as action recognition, where models learn to detect and classify actions in video sequences.

Limitations of Unsupervised Learning

One limitation is the need for a large amount of unlabeled data. Unsupervised learning algorithms and contrastive learning rely on patterns and structures within the data to learn meaningful representations. However, without labeled data to guide the learning process, the algorithms require a substantial amount of unlabeled data to capture the underlying patterns effectively.

Another limitation is the lack of interpretability. Unsupervised learning models often produce complex representations that are difficult to interpret and understand. This can make it challenging to gain insights into the learned features and understand the reasoning behind the model's predictions.

Additionally, while it can learn low-level features and patterns, unsupervised learning may struggle to capture more complex relationships and high-level semantic meanings present in the data.

Conclusion

Contrastive learning is a powerful technique in computer vision that enables models to learn the general features of a dataset without the need for labeled data. By contrasting similar and dissimilar samples, contrastive learning allows models to learn discriminative representations and capture the underlying structure of the data.

Picsellia's platform can be a valuable asset for this technique, as it involves numerous iterations with varying configurations to achieve improved performance. The platform offers the ability to conduct a wide range of experiments, such as using different dataset versions, sets of hyperparameters, and evaluation techniques. Additionally, it includes an experiment tracking functionality that keeps a record of all experiments, guiding users towards finding the optimal version of their models while keeping track of the datasets used.

Related from Picsellia

Train models your way

Use pre-built pipelines for YOLO, SAM2, and more — or bring your own code with PyTorch, TensorFlow, or Hugging Face.

Explore the AI LaboratoryShip vision AI 10x faster

Picsellia is the end-to-end MLOps platform for computer vision — from data management to production deployment.

See the PlatformStay up to date

Get the latest posts on computer vision, MLOps, and AI delivered to your inbox.

Related articles

From Computer Vision to Industry 4.0: How Scortex Is Shaping Automated Visual Inspection

Discover how Scortex leverages AI and computer vision for automated visual inspection, from defect detection to anomaly detection and real-time insights.

Mastering Data Annotation for AI Projects in 2025

This article will discuss the importance of data annotation in AI and the best practices and strategies for overcoming labeling hurdles.

2025 Trends in Computer Vision: What to Expect

Learn about the upcoming Computer Vision trends in 2025.