The Cost of Tool-Switching in Computer Vision Pipelines

Tool overhead isn't additive — it compounds. Learn how context switching between ML tools costs CV teams hundreds of engineering hours per year.

Picsellia Team

·5 min read

Put your MLOps into practice

Stop stitching tools together. Get an end-to-end CV platform with built-in automation.

When a 2-minute data check becomes a 2-hour debugging session

Your model's mAP drops 8% at epoch 23. The alert fires. Now you need to figure out which images in the validation set caused the regression.

Here's what that investigation actually looks like for most CV teams:

- Open your experiment tracker (MLflow, W&B)

- Find the run ID and training parameters

- Switch to your annotation platform (CVAT, Label Studio, Encord, Labelbox)

- Try to figure out which dataset version was used

- Download a subset of images to look at locally

- Realize you downloaded the wrong split

- Start over

Total time: 2+ hours. Tool switches: 6-8. Root cause found on first attempt: almost never.

This isn't an edge case. This is Tuesday.

The math gets ugly fast

Tool overhead isn't additive. It compounds.

Rough numbers:

- Time lost per tool switch: ~2 minutes

- Tools in a typical CV stack: 4-6 (storage, annotation, training, tracking, registry, deployment)

- Daily model checks per engineer: ~8

For a 5-person team:

- Per engineer: 16 minutes/day on tool transitions

- Weekly: 6.5 hours

- Annual: 325 hours, or about 8 full work weeks

And that doesn't count:

- Fixing version mismatches

- Reconstructing broken data lineage

- Recreating experiment context you lost

- Getting new team members up to speed on the mess

The bigger problem: when switching tools is painful, people skip validation steps. Unreliable models ship.

Three problems I keep seeing

1. Metadata gets lost between systems

Your annotation platform has the labels. Your experiment tracker has the metrics. Your cloud storage has the images. Each system thinks it's the source of truth.

Try answering this: when your model hit 91% precision, which images had a specific business tag?

If your tools aren't integrated, that question doesn't have an answer. The link between training data and model performance breaks at system boundaries.

2. Everyone downloads everything

"Let me pull down the validation set to investigate" becomes the default.

Now you have:

- Something in S3/GCS (version unclear)

- A copy on your laptop (/Downloads/val_set_v3_final/)

- Another copy on a teammate's EC2 instance

- Training data cached on GPU boxes

When something breaks, figuring out which dataset the model actually used becomes detective work.

3. Context switching kills deep work

Each jump between IDE, experiment tracker, annotation platform, notebook, and cloud console costs roughly 15 minutes of context reload. Not working time. Time spent:

- Remembering where you were

- Logging into different UIs

- Mapping between different data representations

- Remembering why you switched tools in the first place

These interruptions add up. Less time for actual model work.

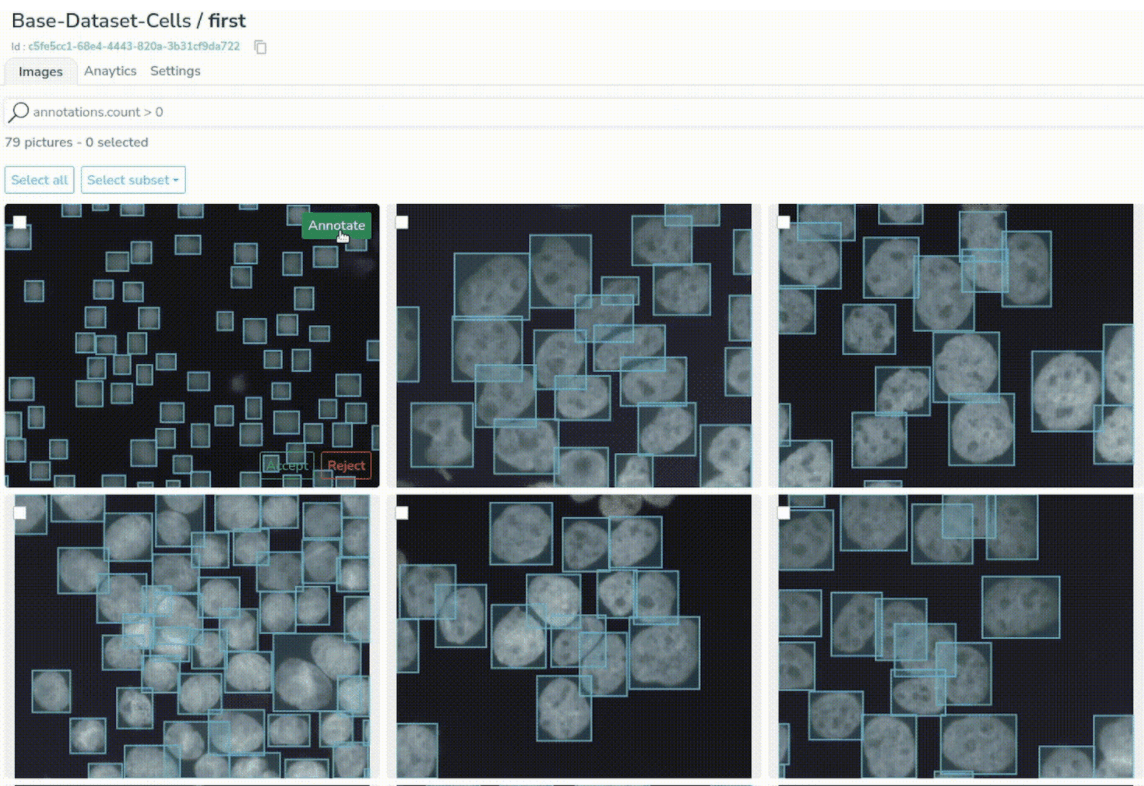

What a unified system looks like

The fix isn't better integrations between existing tools. It's putting everything in one place.

Compute moves to data, not the other way around

Data stays in centralized storage with queryable metadata. Annotation, visualization, analysis all happen server-side. Results stream to your interface.

No local downloads means:

- Dataset versions stay intact

- Metadata doesn't get lost

- Audit trails work

- Everyone sees the same thing

Built-in visualization for specialized data

If you're working with thermal, multispectral, or medical imaging, you shouldn't need to export files or write custom visualization code to toggle spectral channels or adjust gamma.

Lineage tracking that goes both ways

Every experiment links to:

- The exact dataset version (with content hash)

- Annotation state at training time

- Augmentation parameters

- Hardware config

Every dataset tracks:

- Which experiments used it

- How models performed on it

- Annotation history

This lets you go from "metric dropped" to "here are the images" without switching tools.

Query-based dataset construction

Build datasets with queries, not manual file selection:

dataset = datalake.query(

tags_count=0, # Unlabeled images

metadata={'scene': 'outdoor'},

date_range='2025-Q4'

)

Queries get logged. They're reproducible. They scale. Teams can share them.

What changes when you unify

Teams that move to unified platforms report:

Speed:

- Experiments per month: 8 to 18

- Infrastructure time: 40% of engineering hours down to 5%

- Time from metric anomaly to root cause: 2+ hours to under 90 seconds

Quality:

- Better reproducibility from automatic versioning

- More consistent annotations from centralized QC

- Fewer production incidents from better lineage tracking

Operations:

- Onboarding: 2 days to 20 minutes (one login, one interface)

- Peak season data handling: 4x volume increases without workflow changes

A benchmark for your current setup

Ask yourself: how long does it take to go from a metric degradation alert to viewing the specific images that caused it?

If that requires:

- Opening multiple applications

- Manually correlating IDs across systems

- Downloading data locally

- Writing custom scripts

...your tools are slowing you down.

A modern platform should let you:

- Click the anomalous metric

- See the linked dataset version

- Filter to the problem images

- Examine annotations and metadata

- Launch a corrective training run

All in under 90 seconds.

If you're building in-house

Focus on:

- A unified metadata schema across annotation, training, and deployment

- Centralized storage with role-based access

- APIs for everything so you can automate

- Audit logging for compliance and debugging

If you're evaluating platforms

Look for:

- BYOC (bring your own cloud) for data sovereignty

- Native versioning for datasets, models, and experiments

- Integrated annotation with quality control

- Deployment integration for monitoring in production

The bottom line

Over the past five years, specialized ML tools have multiplied. Teams now have best-in-class components for each pipeline stage. The problem: integration overhead eats an increasing share of engineering time.

For computer vision specifically, where data is large, annotation is labor-intensive, and debugging requires looking at images, unified platforms make a real difference.

The question isn't whether your current toolchain "works." It's what your team could build if they weren't spending 40% of their time on data transfers and context switching.

Related from Picsellia

Automate your ML pipelines

Set up continuous training and deployment with automated triggers, shadow deployments, and feedback loops.

Explore Automated PipelinesOrganize and version your datasets

Version, slice, and manage datasets with full traceability — from raw images to production-ready splits.

Explore Dataset ManagementStay up to date

Get the latest posts on computer vision, MLOps, and AI delivered to your inbox.

Related articles

Top 5 experiment tracking tools for Computer vision

AI is typically achieved through iterative and experimental processes such as changing the model, running multiple experiments, and examining the results.

Why Do Classical MLOps Tools Not Fit Computer Vision?

Computer vision pipelines require a set of processes that are exclusive to Computer Vision. This is where CVOps comes to play.

How to Use Computer Vision in Cancer Research With MLOps

We introduced Picsellia's new features by presenting a cancer cell research real use-case. Learn how we applied computer vision to medical research.