What is OCR? Optical Character Recognition Software Explained

In-depth dive into OCR, from its definition and history to its underlying technologies and the most common (and valuable) applications.

Picsellia Team

·10 min read

Ready to build computer vision?

Go from raw images to production models. Free trial, no credit card, cancel anytime.

What is OCR? Optical Character Recognition (OCR) Software Explained

The omnipresence and exponential growth of information (data) and communication technology (ICT) make digitization a critical pillar in this era. Over time, diverse techniques of interacting with, storing, and retrieving data have evolved. Prior methods, like manual data entry or microfilm, were time-consuming, labor-intensive, or less accurate.

Enabling computers and machines with optical prowess, known as optical character recognition (OCR), revolutionized digitization. It equipped them with human-like visual abilities. This ability enabled them to input, access, transfer, consume, or process data faster and more efficiently, making data digitization seamless. It has grown beyond the mundane idea of scanning and converting a physical document into text format.

This article will provide an in-depth dive into OCR, from its definition and history to its underlying technologies and the most common (and valuable) applications.

What is OCR, and why is it important?

Optical Character Recognition (OCR), as the name implies, recognizes characters optically. The technology enables computers to read text from digital image file formats like camera image files, image-only PDF files, scanned paper documents, printed documents, screenshots, handwritten text, etc., by identifying and recognizing each character in the digital image file. It scans, extracts, and converts characters from digital image files to machine-readable code.

OCR systems streamline workflows and boost productivity by creating an interface connecting the physical and digital worlds. It eliminates the need for humans to interface with data each time manually. This automatic interface allows computers to automate data entry, extraction, storage, analysis, or processing. The benefits of OCR automation for operations and processes involved in using text from digital image files include:

- Decrease in operational errors

- Reduce cost of operation

- Increase in efficiency

- Minimizes human effort

- Speeds up execution time

How does it work?

OCR systems understand visual information by augmenting the working principles of the human eyes and brain. Optical systems have a fundamental design of manipulating light through reflection or refraction. Like the eye, OCR systems adopt the concept of the reflection of light to form an image. Think of how a shadow forms when you project light over an object. A shadow is technically an image reflection of the original object in 2D (lower-dimensional).

The eye encodes this 2D image as electric signals and sends them to the brain for interpretation. The brain decodes the signals to determine the object from the shape of its shadow.

The technology behind OCR

The technology for translating text in images into electronic signals that machines can understand and use is broad. As such, there have been many different implementations of OCR over the years. However, OCR software generally comprises a three-step process of image preprocessing, classification (text detection and recognition), and post-processing. They utilize algorithms ranging from traditional image processing to machine learning or deep learning techniques.

Preprocessing Layer

In a nutshell, this step implements a compression technique on the image that enhances the image's quality for the machine to make sense of and recognize text (characters). OCR software takes 2D image files as input. Irrespective of the stack of preprocessing techniques used, it starts by scanning and copying the image content. The image processing component readjusts the scanned image's orientation and alignment to fit the dimensions and resolution of the scan with a deskewing method.

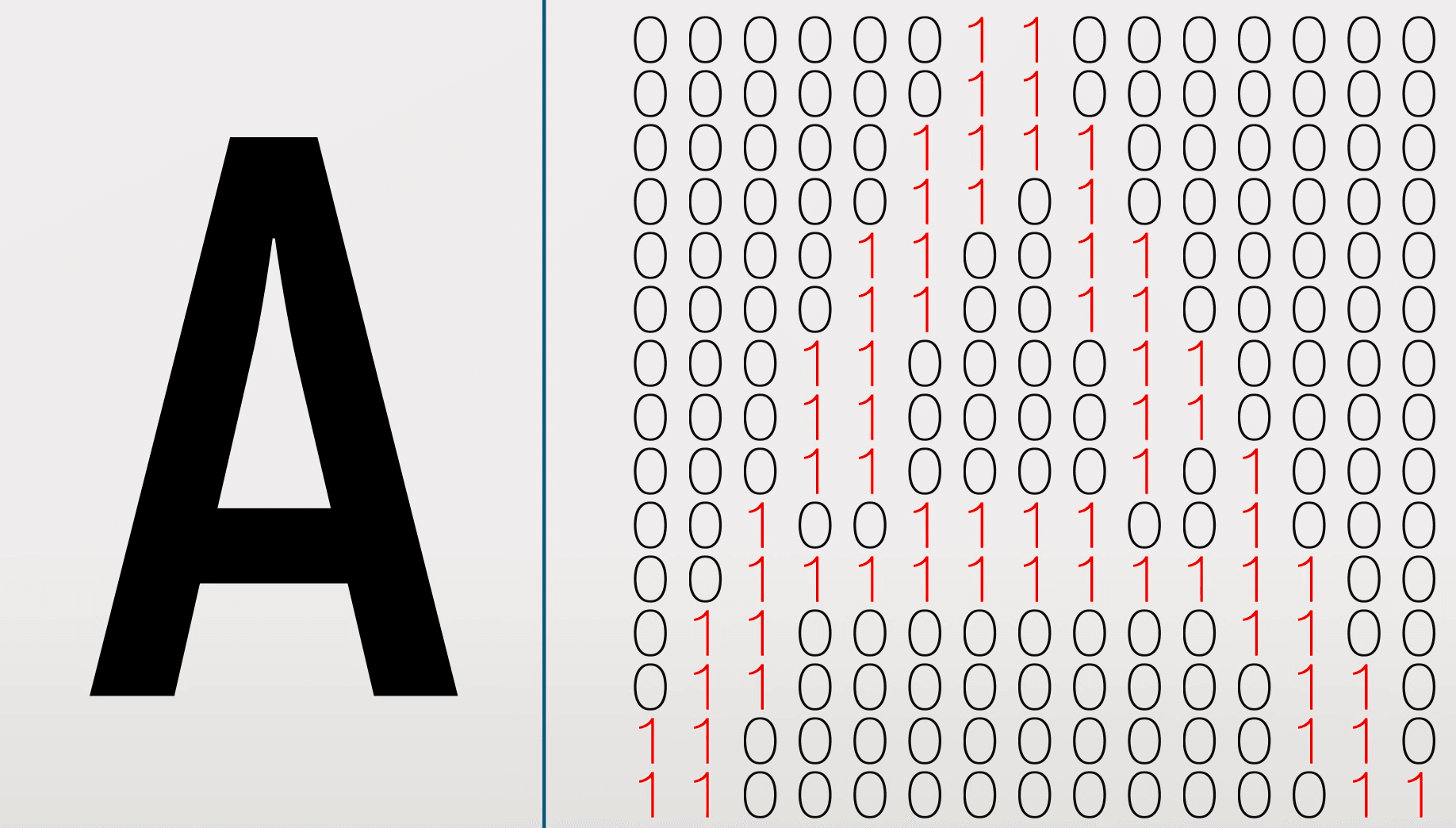

Since pixel (image density) values generally range from 0 to 255 and define the image's resolution, binarizing them to pixel values of 0s and 1s renders the scanned image in high contrast to form a bitmap. A bitmap is typically a lower-dimensional representation of the original 2D image. At this point, the depth (color) of the image is gone, leaving you only with black and white pixels. It's just like a shadow of the original image. White areas denoted by 1s denote the background, whereas black regions denoted by 0s denote characters.

What is ocr optical character recognition software explained

source: How does OCR work? by Aryaman Sharda

What is ocr optical character recognition software explained

source: How does OCR work? by Aryaman Sharda

Binarization also cleans and filters areas of the image with noticeable noise, leaving complex regions untouched. Coupling a noise reduction technique with binarization gives better results.

The last step is to segment the black bitmap pixels that form single-connected components to be analyzed and processed separately. These pixels tend to be the characters.

What is ocr optical character recognition software explained

source: optical character recognition by computerphile

What is ocr optical character recognition software explained

source: optical character recognition by computerphile

Classification

This step passes the single-connected pixels into a classifier that uses either a pattern recognition or feature extraction algorithm for character identification. The level of efficiency in character recognition comes as a tradeoff for the algorithm the system employs.

Pattern recognition identifies characters by analyzing a single connected black pixel at once. It relies on many examples of a character in various fonts and formats to identify characters accurately. It’s most likely to miss characters that don’t have an exact example to match in its database.

What is ocr optical character recognition software explained

What is ocr optical character recognition software explained

Feature extraction identifies characters by analyzing the lines, edges, curves, loops, and strokes formed by a single connected black pixel. This algorithm develops a deeper understanding of the characters, enabling it to handle new examples of a character without needing an exact example.

What is ocr optical character recognition software explained

What is ocr optical character recognition software explained

Post-processing

Downstream, the computer matches the identified character to its respective ASCII code (American Standard Code for Information Interchange). This code produces a digital text of the character as output, which a person or another computer can edit or search digitally.

Early OCR used heuristic algorithms for pattern recognition and feature extraction, which required manual guidance and correction. Sometimes, they could only perform marginally faster than human typing. Advanced recognition, such as multilingual or handwriting style recognition, is now possible with sophisticated algorithms that leverage computer vision (CV) and natural language processing (NLP). They can use grammatical standards to refine recognition by analyzing broader verbal patterns and contextual clues. This type of artificial intelligence (AI) powered OCR is called intelligent character recognition (ICR).

The History of OCR

Edmund Fournier d'Albe created the optophone, one of the earliest electric OCR devices, in 1914. As it scanned the words on a page, the optophone distinguished between the dark ink of text and lighter blank spaces, generating tones corresponding to different letters, allowing blind people to read with some practice.

A few years later, around the late 1920s and early 1930s, Goldberg developed a machine that could convert printed text to telegraph code. It was one of the first technologies to convert printed characters to electrical impulses rather than sounds. He patented it in 1931.

However, it wasn't until 1974 that OCR began to take on a more modern form, starting with Ray Kurzweil, who founded Kurzweil Computer Products, Inc. He created an omni-font OCR that could read text in virtually any font.

Kurzweil later decided that his technology's best use was enabling computers to read text aloud for the visually impaired. The product made use of a text-to-speech synthesizer and a CCD flatbed scanner. Kurzweil displayed the finished product during a January 13, 1976, news conference. Kurzweil Computer Products created the first commercially available OCR software released to the public in 1978. Kurzweil later sold his company to Xerox in 1980.

However, OCR technology gained widespread popularity during the early 1990s. By the 2000s, OCR had become accessible via the web, the cloud, and mobile devices. Today, OCR has more versatile capabilities, from automated data entry from text images to language translation.

OCR use cases across Industries

OCR powers various domains of well-known technologies you come in contact with daily. Some use cases of industries applying OCR include:

Forensics

Handwriting forensics analysis is a field in forensics that examines handwriting to determine its authenticity or trace its authorship. Handwriting analysts gain an advantage from optical character recognition (OCR) since it converts handwritten text into machine-readable text that the machine can automatically examine. Handwriting analysts can use OCR to compare handwriting samples and spot writing patterns quickly and reliably. Analyzing ransom notes, signatures, falsified papers, identifying handwriting, authenticating documents, and studying medical records are some of the many uses for optical character recognition (OCR) in handwriting forensics.

Healthcare & Biotech

Accuracy and patient care are delicate and fundamental in the health industry. OCR enables effective management and analysis of patient information to provide the best possible care. With OCR, digitizing patient records such as medical charts, lab reports, and imaging results is accurate, efficient, and safe. OCR also helps accelerate research and development in the health industry. To better understand diseases and conditions—and to produce new or improved medications, vaccines, and treatments— researchers can easily retrieve quality data from a specific set of patients or scientific and technical journals.

Logistics

OCR is used in the logistics industry to automate the processing of documents like bills of lading, shipping labels, and customs declarations. It enables the processing of documents in a short amount of time, thereby increasing precision and productivity and reducing costs for businesses. Other extensive applications of OCR in logistics include warehouse management, transportation management, and customer service.

Utilities and infrastructures:

OCR reads meter data in the utility industry to automate invoicing and keep tabs on energy and water usage. It can also decipher construction drawings like blueprints. Other applications of OCRs in the infrastructure and utility industries are automating customer care and data collection for analytics.

Manufacturing

Automating critical monotonous tasks with OCR makes manufacturing businesses more efficient, profitable, and sustainable. Quality control is a vital manufacturing task for inspecting product compliance and quality standards. OCR runs automated inspections on manufactured products for information like serial numbers, labels, barcodes, VINs, etc. Other tasks OCR manages in manufacturing include inventory management, predictive maintenance, shipping, and receiving.

Defense & Aerospace

Many operations in the defense and aerospace industries rely on security/cybersecurity. For safety and data protection, OCR enables the automatic processing of visas, passports, and travel applications. It eliminates manual authentication errors, enhances information processing, and reduces fraud at border security and military sites. The defense industry can also extract text from intelligence sources, such as satellite photos and aerial photography, to track enemy movement, identify potential dangers, and organize military operations. Other aerospace applications include automating the processing of logistical documents like shipping manifests and inventory data.

Automotive

OCR is crucial for autonomous vehicles because it enhances their ability to perceive and understand their environment as humans do. It is essential for their optimal navigation. One primary application of OCR in self-driving cars is the recognition of traffic signs like stop signs, yield signs, speed limit signs, etc.. Part of identifying different types of traffic signs includes reading the information displayed on them, such as the speed limit or the direction to turn. OCR can also recognize lane markers and other road elements.

Conclusion

OCR has advanced from its humble beginnings to becoming a critical tool in the digital landscape. It has revolutionized how we interact with data, enabling us to unlock the immense value of physical and digital data efficiently.

OCR systems are becoming more accurate and adaptable as AI/ML algorithms improve and quality data becomes more widely available. We expect more advancements in numerous areas as OCR technology evolves.

Related from Picsellia

Ship vision AI 10x faster

Picsellia is the end-to-end MLOps platform for computer vision — from data management to production deployment.

See the PlatformStay up to date

Get the latest posts on computer vision, MLOps, and AI delivered to your inbox.

Related articles

From Computer Vision to Industry 4.0: How Scortex Is Shaping Automated Visual Inspection

Discover how Scortex leverages AI and computer vision for automated visual inspection, from defect detection to anomaly detection and real-time insights.

Mastering Data Annotation for AI Projects in 2025

This article will discuss the importance of data annotation in AI and the best practices and strategies for overcoming labeling hurdles.

2025 Trends in Computer Vision: What to Expect

Learn about the upcoming Computer Vision trends in 2025.